Many apparent cognitive biases can be explained by a strong desire to look good and a limited ability to lie; in general, our conscious beliefs don’t seem to be exclusively or even mostly optimized to track reality. If we take this view seriously, I think it has significant implications for how we ought to reason and behave.

(See also: overcoming bias as a red queen’s race, NearFar at Overcoming Bias, Eliezer’s recent Facebook post.)

I.

Let’s start with an easy example.

Suppose that I’m planning to meet you at noon. Unfortunately, I lose track of the time and leave 10 minutes late. As I head out, I let you know that I’ll be late, and give you an updated ETA.

In my experience, people—including me—are consistently overoptimistic about arrival times, often wildly so, despite being aware of this bias. Why is that?

(The same model can also be applied to other time estimates, which seem to be similarly biased. Everything takes longer than you expect, even after accounting for Hofstadter’s law.)

My total delay is the sum of two terms:

- Error: How badly I messed up my departure time. If this term is large then it signals incompetence and disrespect.

- Noise: How unlucky I got with respect to traffic (and other random factors). This doesn’t really signal much.

When I arrive at 12:10, I want you to attribute the delay to noise rather than error.

If I tell you a 12:05 ETA and you believe it, then you’ll attribute 5 minutes to error and 5 minutes to noise. If I tell you a 12:10 ETA and you believe it, then you’ll attribute 10 minutes to error and 0 minutes to noise. I’d prefer the first outcome.
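A minimal sketch of this bookkeeping in Python, with times measured in minutes after noon. The function `attribute_delay` is hypothetical. It assumes that a listener who believes my ETA charges all lateness up to the ETA to error and all lateness beyond it to noise, which is the rule the two cases above use.

```python
def attribute_delay(scheduled: float, eta: float, arrival: float) -> tuple[float, float]:
    """Split total lateness into (error, noise) as a listener who believes the ETA would.

    Hypothetical rule: lateness up to the announced ETA is charged to error
    (my mistake), and lateness beyond the ETA is charged to noise (traffic).
    """
    error = eta - scheduled
    noise = arrival - eta
    return error, noise


# Meeting at noon (0), actual arrival at 12:10 (10).
print(attribute_delay(0, 5, 10))   # announced 12:05 -> (5, 5)
print(attribute_delay(0, 10, 10))  # announced 12:10 -> (10, 0)
```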

At the same time, if my ETA is inaccurate, I’ll pay a social cost for being incompetent/disrespectful in a different way.

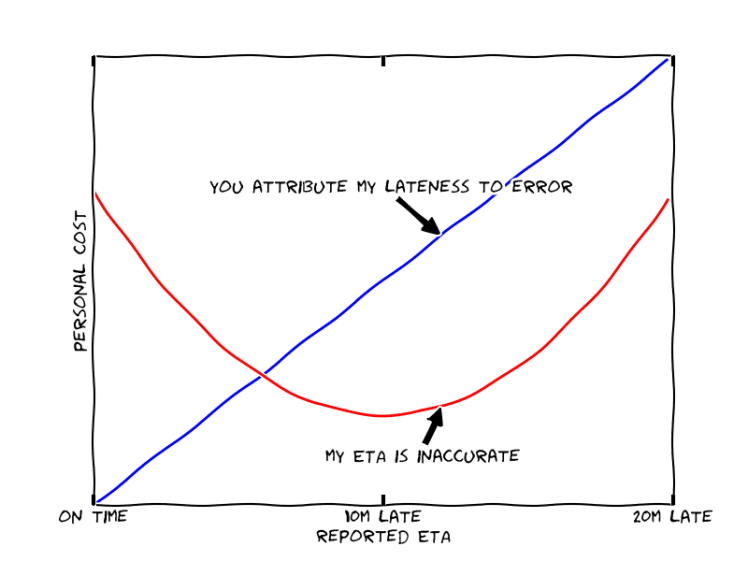

So my loss function is a sum of two terms:

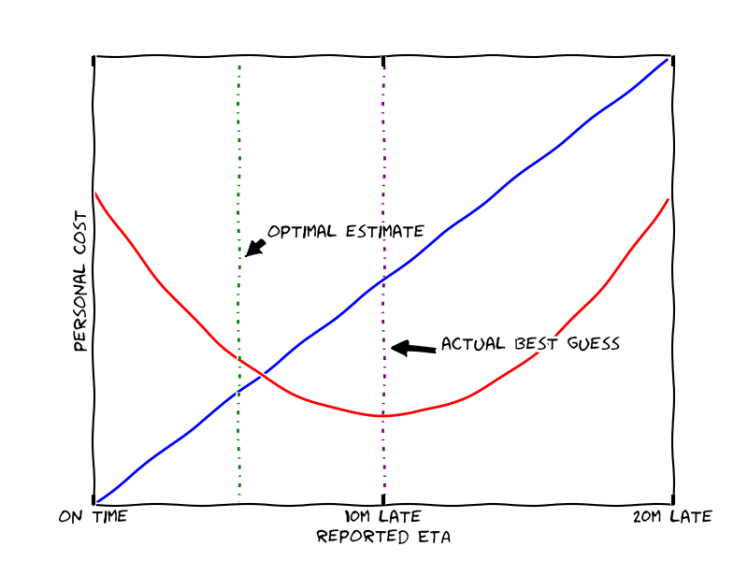

In this example I expect to arrive 10 minutes late, and so predicting “10 minutes late” minimizes the expected cost from the error in my ETA (the red curve).

But in order to minimize my total cost, I would pick the point where the two curves have equal and opposite slopes, so that their sum is flat; in this case, that means predicting “5 minutes late.”

In fact, this procedure always results in distorted estimates, no matter how large we make the penalty for bad predictions. This is because the cost of deviating slightly from the accurate prediction is second order (it depends on the square of the deviation), while the signaling benefit of shading the prediction is first order (it grows in proportion to the deviation). So doubling the cost of bad predictions halves the size of the optimal distortion, and never drives it to zero.
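The argument can be checked with a toy model. The specific cost functions are assumptions for illustration, not read off the figures: the accuracy cost is `a * (p - mu)**2`, where `p` is the announced lateness and `mu` the expected lateness, and the signaling cost is `s * p`, on the assumption that every announced minute is attributed to error. Setting the derivative `2a(p - mu) + s` to zero gives `p = mu - s / (2a)`, so the distortion `s / (2a)` halves whenever `a` doubles.

```python
def total_cost(p: float, mu: float, a: float, s: float) -> float:
    """Expected cost of announcing p minutes late (hypothetical model).

    a * (p - mu)**2 : U-shaped accuracy cost around the expected lateness mu.
    s * p           : linear signaling cost for admitting lateness.
    """
    return a * (p - mu) ** 2 + s * p


def optimal_prediction(mu: float, a: float, s: float) -> float:
    """Closed-form minimizer: solve 2a(p - mu) + s = 0 for p."""
    return mu - s / (2 * a)


def grid_minimizer(mu: float, a: float, s: float,
                   lo: float = -20.0, hi: float = 20.0, steps: int = 40001) -> float:
    """Brute-force check of the closed form on a grid with spacing 0.001."""
    grid = (lo + (hi - lo) * i / (steps - 1) for i in range(steps))
    return min(grid, key=lambda p: total_cost(p, mu, a, s))


mu = 10.0  # expected lateness in minutes
s = 1.0    # assumed marginal signaling cost per announced minute
for a in (0.1, 0.2, 0.4, 0.8):  # each step doubles the accuracy penalty
    p = optimal_prediction(mu, a, s)
    print(f"a={a}: announce {p:.3f} min late, distortion {mu - p:.3f}")
```

With a linear signaling cost the halving is exact. For a smooth but nonlinear signaling cost it holds approximately, once the accuracy penalty is large enough that the distortion is small.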

(You might wonder why the red curve is shaped like a U, rather than a V; what if the cost of inaccuracy is |prediction-reality|? The issue is that I don’t know exactly when I’ll arrive. So even if the cost is |prediction-reality|, I need to average over the possible different realities, and I get back to a U.)
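A quick numerical check of this point, assuming purely for illustration that my actual lateness is uniformly distributed between 5 and 15 minutes. Even though the cost `|p - x|` is V-shaped for each possible arrival `x`, the expected cost is a smooth parabola in the prediction `p`, namely `((p - 5)**2 + (15 - p)**2) / 20` on that interval, minimized at the median, 10.

```python
def expected_abs_error(p: float, lo: float = 5.0, hi: float = 15.0, n: int = 20_000) -> float:
    """E|p - X| for X ~ Uniform(lo, hi), approximated with the midpoint rule."""
    width = (hi - lo) / n
    total = 0.0
    for i in range(n):
        x = lo + (i + 0.5) * width  # midpoint of the i-th cell
        total += abs(p - x)
    return total / n


def closed_form(p: float, lo: float = 5.0, hi: float = 15.0) -> float:
    """Exact E|p - X| for lo <= p <= hi: a parabola in p (a U, not a V)."""
    return ((p - lo) ** 2 + (hi - p) ** 2) / (2 * (hi - lo))


for p in (6.0, 8.0, 10.0, 12.0, 14.0):
    print(f"predict {p:4.1f}: numeric {expected_abs_error(p):.4f}, exact {closed_form(p):.4f}")
```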

II.

One aspect of this story is missing: why should these biases affect people’s beliefs? Shouldn’t the rational agent say they will be 5 minutes late, but believe they will be 10 minutes late?

Humans seem to be terrible at lying. If we want other people to think that we believe X, the easiest approach is to actually believe X.

I don’t have a great story for why lying should be so hard and/or aversive. It might be that maintaining two narratives is too complex; it may be something completely different. In any case, the difficulty of lying seems to be a fundamental and widely-recognized fact about human nature.

Given that humans are bad at lying, and that their brains are highly optimized, we should expect their beliefs to be optimized in order to be presentable, as well as to be accurate: if I am unable/unwilling to lie about my ETA, then I should expect to actually believe that I’ll get there in 5 minutes.

(There are many instances where people lie successfully. But it seems to involve significant costs, and the truth often leaks, if only via weak statistical cues. So I expect our brains to be optimized to avoid it where possible, especially in cases where there isn’t a plausible cover story about how our lie is good for the person we are lying to.)

III.

I think that the same story explains or partially explains many biases:

- Overconfidence: Competent people will rationally be more confident. So if I’m confident, it is totally rational for other people to infer that I’m more likely to be competent. Moreover, observed performance doesn’t totally screen off this effect, because noise also affects performance. So overconfidence is rational.

- Epistemic overconfidence: Suppose that I answer a question and then am asked to estimate the probability that I am correct. It is rational to overestimate my own probability of being correct, so that others will (rationally) believe that I am better-informed.

- Hindsight bias: Suppose that I know that X actually occurred, and now I’m trying to estimate the probability that I would have assigned to X in advance. A cleverer or better-informed person would on average have assigned a higher probability to X, since it’s the truth. So if I want to convince you that I am clever and well-informed, it is rational for me to overestimate the probability that I would have assigned to X.

- Confirmation bias: Suppose that in the past I’ve claimed X. In particular, this implies a claim that new evidence will systematically support X (given my background views). So if I encounter some evidence against X, it is rational to downplay that evidence in order to make my past predictions and judgments look better.

- Sunk cost fallacy: Suppose that I’ve paid a large cost to obtain the option to do X. If I don’t do X, then onlookers will correctly infer that I made a mistake in the past and think worse of me. So it is rational for me to do X even when it is no longer worth doing on its own merits.
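The overconfidence bullet above, including the claim that observed performance doesn’t fully screen off confidence, can be illustrated with a standard Gaussian model. The setup is hypothetical: competence `c` has a normal prior with mean 0, and an observer sees two independent noisy readings of it, my stated confidence and my measured performance. The posterior mean of `c` is then the precision-weighted average of the two readings, shrunk toward 0.

```python
def posterior_competence(
    confidence: float,
    performance: float,
    prior_prec: float = 1.0,
    conf_prec: float = 1.0,
    perf_prec: float = 1.0,
) -> float:
    """Posterior mean of competence c under a hypothetical normal-normal model.

    Prior:   c ~ Normal(0, 1 / prior_prec).
    Signals: confidence  = c + noise with precision conf_prec,
             performance = c + noise with precision perf_prec.
    """
    total_prec = prior_prec + conf_prec + perf_prec
    # The prior mean is 0, so it contributes nothing to the numerator.
    return (conf_prec * confidence + perf_prec * performance) / total_prec


# Same observed performance, honest vs. inflated confidence.
honest = posterior_competence(confidence=0.0, performance=0.0)
inflated = posterior_competence(confidence=1.0, performance=0.0)
print(honest, inflated)

# Even with a very precise performance measurement, confidence still matters.
print(posterior_competence(1.0, 0.0, perf_prec=100.0))
```

However precise the performance measurement becomes (large `perf_prec`), the weight on confidence, `conf_prec / (prior_prec + conf_prec + perf_prec)`, stays positive, so inflating stated confidence still raises the observer’s estimate.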

By considering additional ways in which accuracy trades off against signaling effects, we could explain an even broader range of biases.

My sense is that under typical conditions, signaling tends to be relatively important compared to accuracy. So we shouldn’t feel too surprised if such signaling explanations correctly predict systematic biases (though this is a retrodiction, not a prediction).

Moreover, I’m not aware of many cases where the simple signaling story confidently predicts a bias in the opposite direction from what is observed in practice. (It would be great to find examples of this.)

IV.

To summarize/generalize: the beliefs I’m consciously aware of are themselves highly optimized. Reflecting reality is a useful property for conscious beliefs. But if we can’t lie very well, then adopting particular conscious beliefs has important signaling effects. These need to be traded off against a desire for accuracy, often leading to biased beliefs.

Personally, I don’t care very much about simple cases like predicting arrival times. They are interesting mostly because the existence of bias is so easy to observe and hard to deny. I think the same process is probably at work in more complex and realistic cases where it is harder to notice but much more important.

That is, we know that people make predictably terrible predictions about events where their prediction accuracy is going to get evaluated in ten minutes. Overcoming this bias seems to require significant effort or an unusual cultural context. So what do we expect to happen when people start speculating about e.g. the far future, or ethics, or the efficacy of hard-to-test interventions? Here there is basically no social incentive for accuracy, and I expect our beliefs to be much more unmoored from reality.

Overall I find this model of bias quite compelling:

- It accounts nicely for a bunch of disparate biases in simple situations.

- It rings true as a description of my own behavior.

- It seems to make consistently better predictions about what other people will do. Rationalists are quick to adopt a perspective like “the world is mad,” and to explain errors by postulating a difference between the modern world and evolutionary environment. I think that many of these observations are better explained by this model.

- This model is pretty likely given my other beliefs, namely that: (a) human behavior is optimized in ways people aren’t consciously aware of, (b) humans care a lot about what other people think of them, and (c) humans are not good at consciously lying.

V.

Beliefs about what I want are a particularly important kind of conscious belief: they have an unusually direct effect on my behavior, and for the same reason they have unusually large signaling effects.

I don’t think there is a simple fact of the matter about what I want. There are at least two perfectly good and important concepts.

- What my decisions are optimized for. If I’m deciding between X and Y, and my decisions are optimized for Y, then I’ll pick Y—unless being the kind of person who picks X has good consequences.

- What I consciously believe I want. If I’m consciously deciding between X and Y, and I consciously believe that I want X, then I’m liable to pick X—even if my decisions are actually optimized for Y.

Let’s call these type 1 and type 2 preferences. According to the story I’m telling here, they come apart largely because of the difficulty of lying.

Which of these is what I “really” want? Obviously it depends on how you ask. There are two optimization processes at work here—the process that optimized my decision, and my conscious optimization—and they have different objectives. Let’s call these the type 1 and type 2 optimization processes.

Whenever there are multiple optimization processes with conflicting aims, I think the first order of business is capturing the gains from trade. In this case the gains from trade are massive: conscious and unconscious reasoning are both very powerful. When they are working together they can do pretty impressive things; when they are in conflict it’s kind of a mess.

Some type 1 optimization is done over evolutionary time, and is now embodied in a huge network of heuristics that have historically advanced our type 1 preferences. This is a hard optimization process to bargain with.

But a lot of type 1 optimization is done by goal-directed subconscious reasoning, or by simple reinforcement learning algorithms that can implement surprisingly sophisticated and flexible goal-directed behavior. We can hope to trade with this part of the type 1 optimization.

If doing what I “ought to do” reliably leads to things that I type-1-want, then over time I think this will mechanically increase the frequency with which I do what I “ought to do.” And if a particular explicit plan is a reasonable compromise between type 1 and type 2 preferences, I think that I’m more likely to do it well. Those are precisely the fruits of bargaining.

As I see it, I can implement bargaining in two main ways:

- Apply my conscious reasoning to pursue a better compromise between type 1 and type 2 preferences.

- Consciously make concessions to my type 1 preferences when I unconsciously make concessions to my type 2 preferences (e.g. when I work hard on projects that are much more important according to my type 2 preferences than my type 1 preferences, or when I make accurate predictions in cases where type 1 incentives for accuracy are low).

Instead of bargaining, you could try to just overpower type 1 optimization. Conscious optimization is in some sense smarter than unconscious reasoning, and simple RL algorithms hardly seem like a worthy adversary for our best-laid conscious plans.

I think that this is probably a mistake. This isn’t a fight you can win using modern technology. First, I think it’s really easy to underestimate the power of the optimization we do subconsciously, and more generally to underestimate evolution’s handiwork (I think that rationalists are especially prone to this). Second, even if the underlying RL algorithms are dumb as bricks, you are stuck with them, and over the long run they will be able to tell if deferring to conscious reasoning yields better outcomes than not doing so.

So in the end, I think that “what we ought to do” ought to be a bargain between type 1 and type 2 preferences. We ought to maintain separate mental concepts for type 1 preferences, type 2 preferences, and compromise preferences.

VI.

The main problem with cognitive biases isn’t that our brains are failing at their job. The problem is that our beliefs are optimized exclusively according to our type 1 preferences, whereas we ought to be pursuing a compromise between type 1 and type 2 preferences.

Sometimes this isn’t a big deal. When estimating arrival times it’s not clear that there is very much divergence. Indeed, I suspect that the type 1 optimization is responding to considerations that our type 2 optimization doesn’t understand well, and that in many cases the “biased” answer may be at least as good as an accurate answer, even according to compromise values.

But sometimes this is a huge deal. When I am thinking about distant or abstract topics, actual accuracy is not especially type-1-important but may be exceptionally type-2-important. If I have totally inaccurate views about what the future of AI will look like, or the moral importance of the far future, or the consequences of rising inequality, or the effectiveness of educational interventions… that could easily make my philanthropic work worse than useless.

If we are going to pursue a compromise between type 1 and type 2 preferences, we ought to be highly skeptical of biased answers in these distant or abstract cases.

We could try to improve accuracy by increasing the type 1 incentives for accuracy. In some contexts I think that this is reasonable, but in other cases I think that noise or long delays make it totally unrealistic to provide large enough incentives to overwhelm signaling incentives.

We might also get some mileage simply by making the bargain described in the last section: if having more accurate beliefs causes me to get what I type-1-want, I think my beliefs will naturally become more accurate.

But I think the most powerful tool is adopting epistemic norms which are appropriately conservative; to rely more on the scientific method, on well-formed arguments, on evidence that can be clearly articulated and reproduced, and so on. This is a big handicap for our collective discourse, but I’m not sure there is an attractive alternative (and it’s a huge step over the human default).

Using conservative epistemology becomes more important as we consider more distant topics: it seems fine and even necessary to use raw intuitions to decide what research directions to pursue this hour, or what arguments to explore in order to improve our views. But it seems ill-advised to use raw intuitions to decide what research directions to pursue this decade.

This isn’t just important when we are interacting with other people; I don’t think that we should trust our own brains either. Working things out explicitly, with deduction and calculation and reason, is not merely useful because our brains can’t do it on their own; it’s also useful because in an important sense they don’t want to.

If we exercise the discipline of reasoning things out explicitly and allowing that reasoning to shape our conscious views, I think that two things will happen:

- Good news: our unconscious views will eventually give up, and start to better track the conclusions we would eventually arrive at explicitly.

- Bad news: we will eventually develop a strong aversion to reasoning things out in cases where our intuitions are biased.

The main way I see to avoid the bad news is to cultivate community norms that create type 1 incentives for working things out explicitly, and to construct the bargain such that opting in to / enforcing those norms is good according to our type 1 as well as our type 2 preferences.

If we try to adopt accuracy-enhancing norms as a coup of type 2 optimization against type 1 optimization, I expect it to be a bloodbath. But in fact the gains from trade are massive, and I suspect that we can make everyone happy.

Conclusion

I think that our beliefs are in large part optimized to look good, and that this is an essential observation for any serious attempt to think about our own thinking and how to improve it.

Our beliefs about what we want are also optimized to look good, and this leads to several different notions of “what we want.” I suspect that it is possible to simultaneously get more of everything we want, by implementing a better compromise between these different notions.

Part of this compromise involves improving our beliefs about distant and abstract topics. I think the most promising mechanism is to adopt epistemic norms that promote truth even when many of our intuitions may be optimized for looking good.

Many of these ideas are already floating around the rationalist/EA communities, and I think that these communities are largely valuable to the extent that they are implementing this project. By having a better explicit understanding of the project, I hope that we can do better.

Not coherent.

I disagree that you should compromise. I think that you should let your type 1 decide what you do. I don’t think doing what type 2 wants gives you much good feeling, maybe the short-lived rush of empty accomplishment. I think type 1 should decide what my goals and values are, and type 2 is its tool and honest helper. Type 1 might want to use the ideas of type 2 sometimes, and that’s the use of type 2, not to actually decide for you overall most of the time.